Introducing PATINA

AI image models have become extremely good at producing something that looks like stone, ceramic, brushed metal, leather, or concrete, but these images are not readily usable in a traditional CGI workflow - they have baked-in specular highlights, occlusion, perspective distortions, and any number of other qualities that make it visually appealing on its own, but hard to use in rendering. PATINA aims to bridge that gap, empowering users to create high-resolution, ornately detailed PBR maps from final rendered images. Click here to go straight to the model and try it yourself!

At the core of PATINA is a modified FLUX.2 [klein] backbone. FLUX.2 is built as a latent flow-matching system that couples a vision-language model with a rectified flow transformer, and the klein variant is made specifically for image transformation. That made it a strong starting point for a model that needed to reason about layout, structure, and spatial relationships while still being practical to train and deploy.

In our experiments, klein already had a surprisingly strong prior over geometry. What it did not have, at least not strongly enough on its own, was a grounded understanding of materials. So we added an adapter with a DINOv2 backbone to translate semantic segmentation information into something the model could use for material prediction.

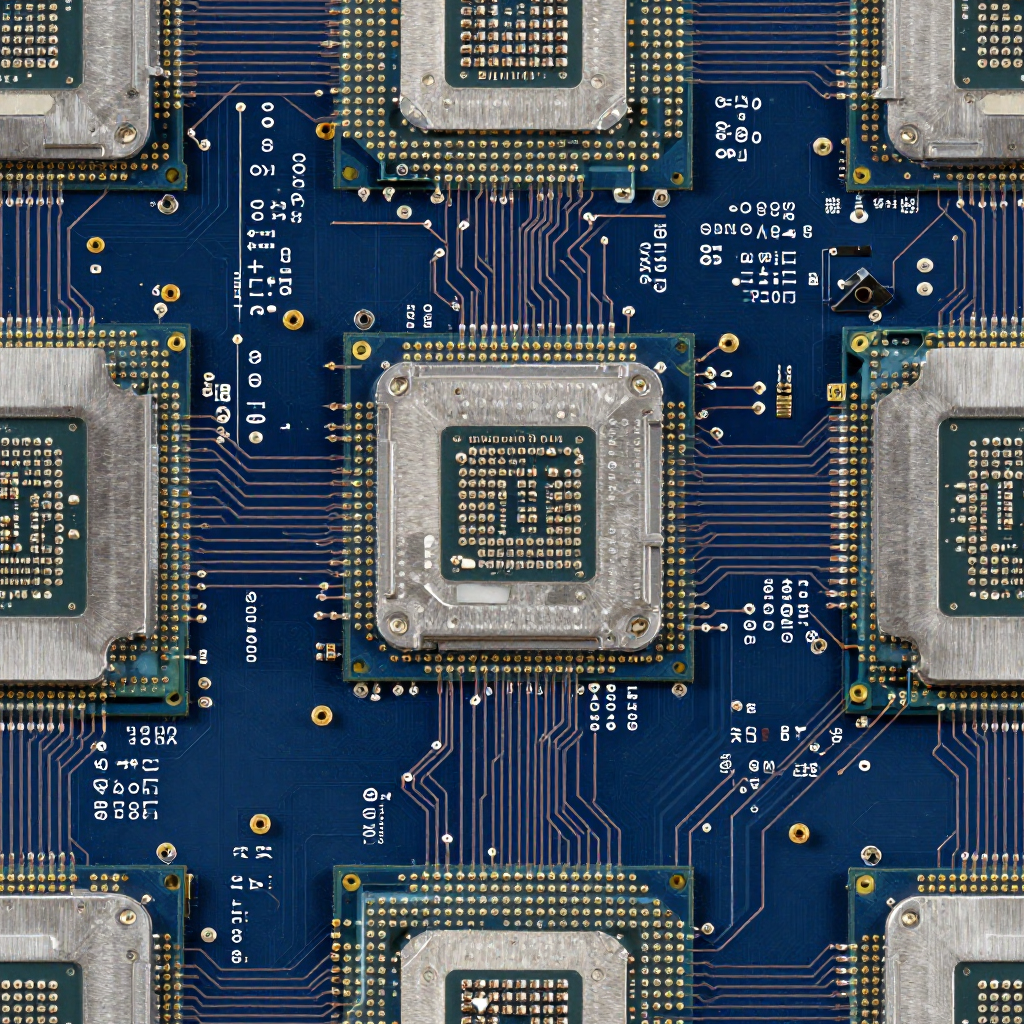

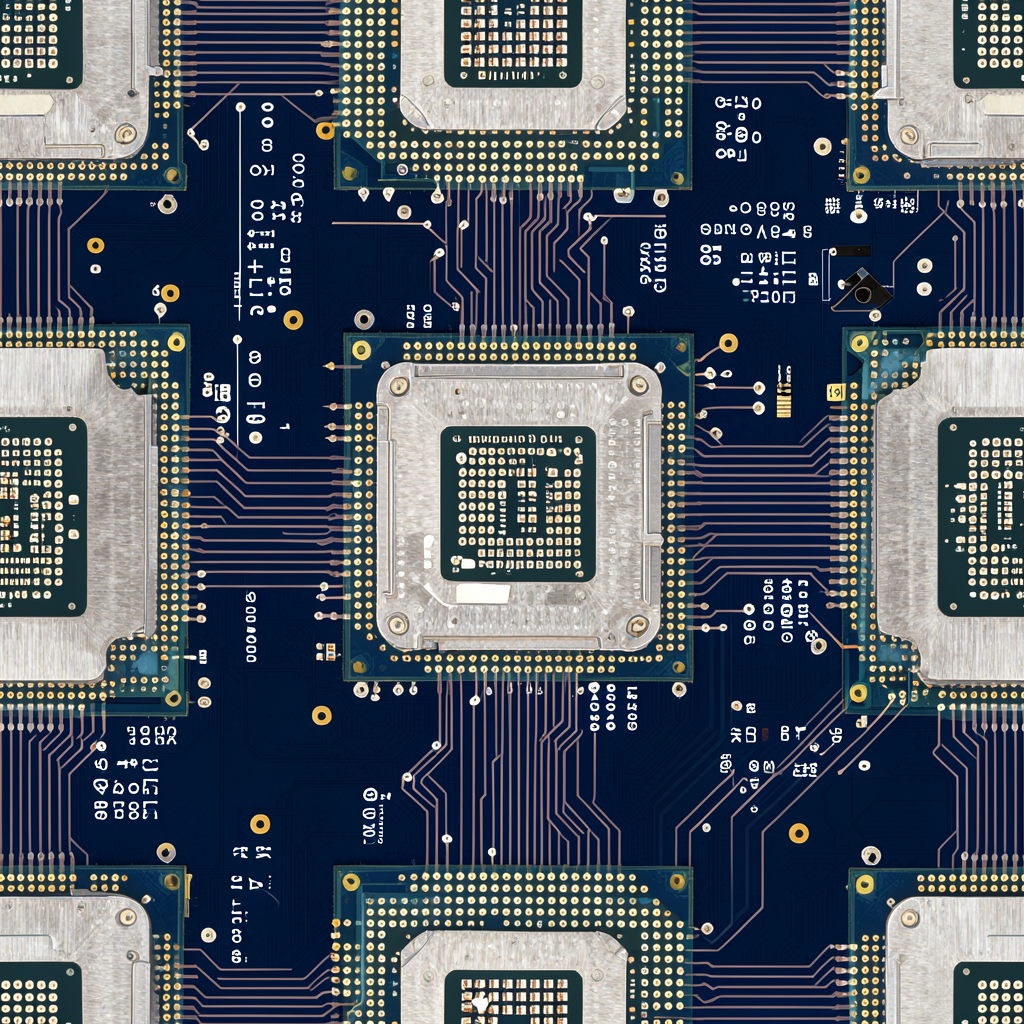

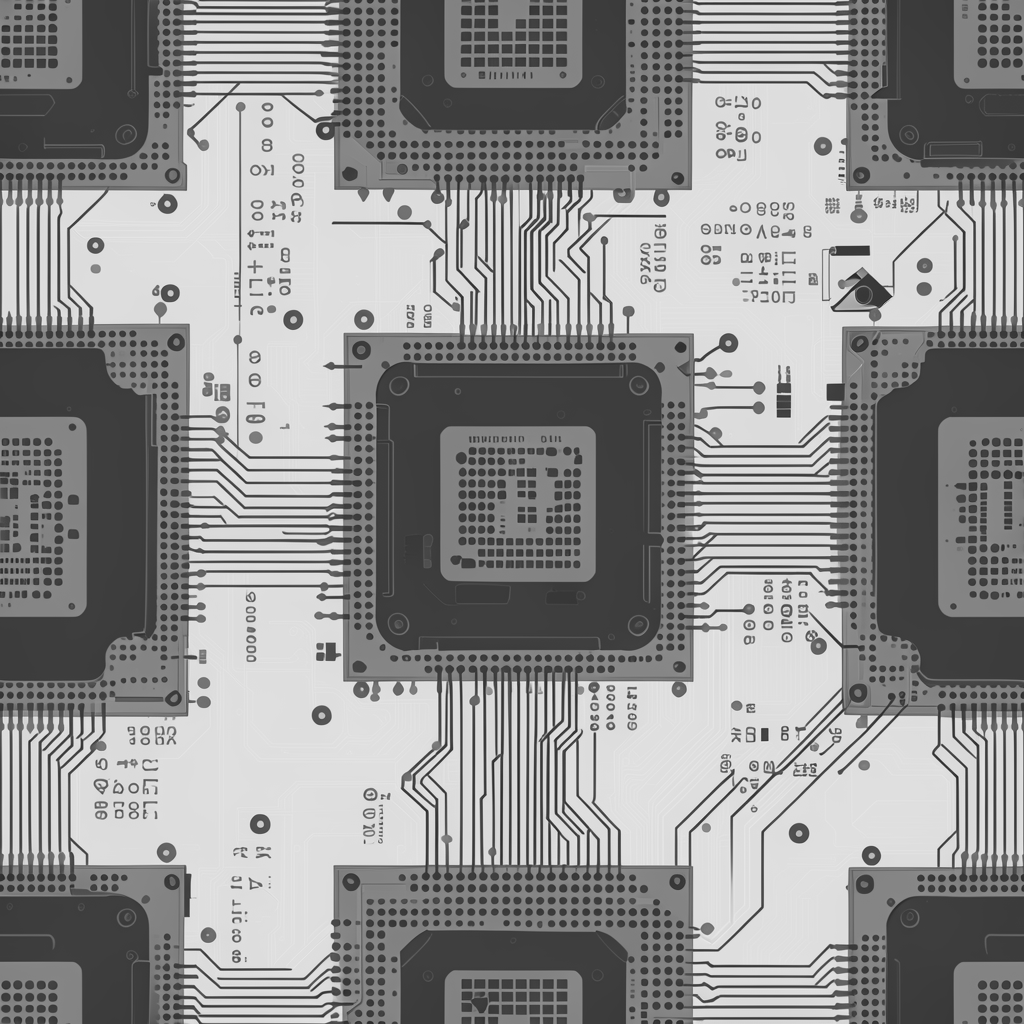

In order from top-left; render, basecolor (albedo), normal, roughness, metalness, height (displacement)

Why materials are harder than geometry

Single-image geometry prediction has advanced quickly. Architectures like DPT and MiDaS pushed monocular depth forward, while more recent diffusion-based systems like Marigold and GeoWizard showed how generative priors can improve detail and zero-shot generalization.

In order from top-left; render, MiDaS, Depth Anything v2, Marigold Depth, PATINA (Ours)

Geometry is only part of the story, however - a depth map can be correct while saying nothing about whether a highlight came from gloss, metalness, or micro-relief, and a normal map can explain orientation without explaining why two surfaces with similar shape reflect light in totally different ways. PATINA lives closer to SVBRDF estimation than to pure geometry prediction: it is trying to recover the latent controls a renderer needs in order to recreate appearance under many possible lighting conditions, not just infer where a surface sits in 3D.

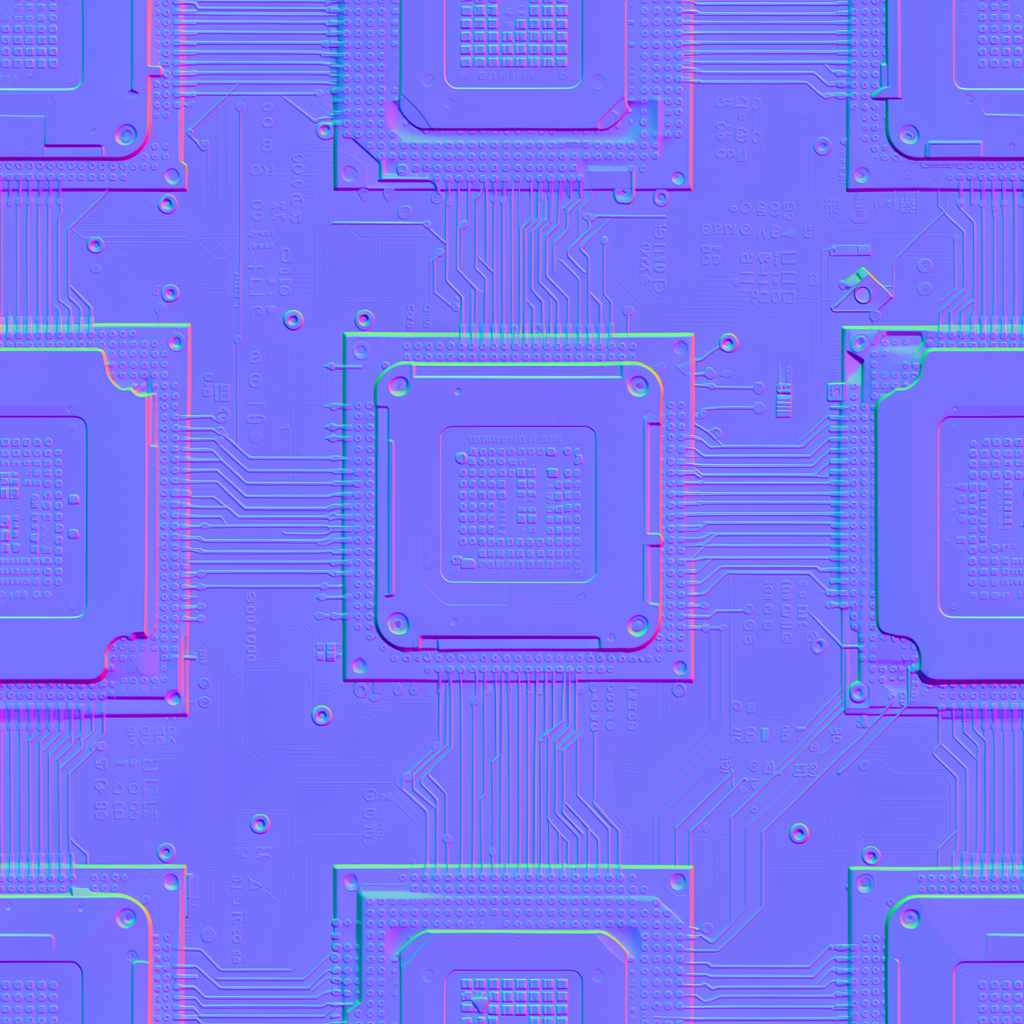

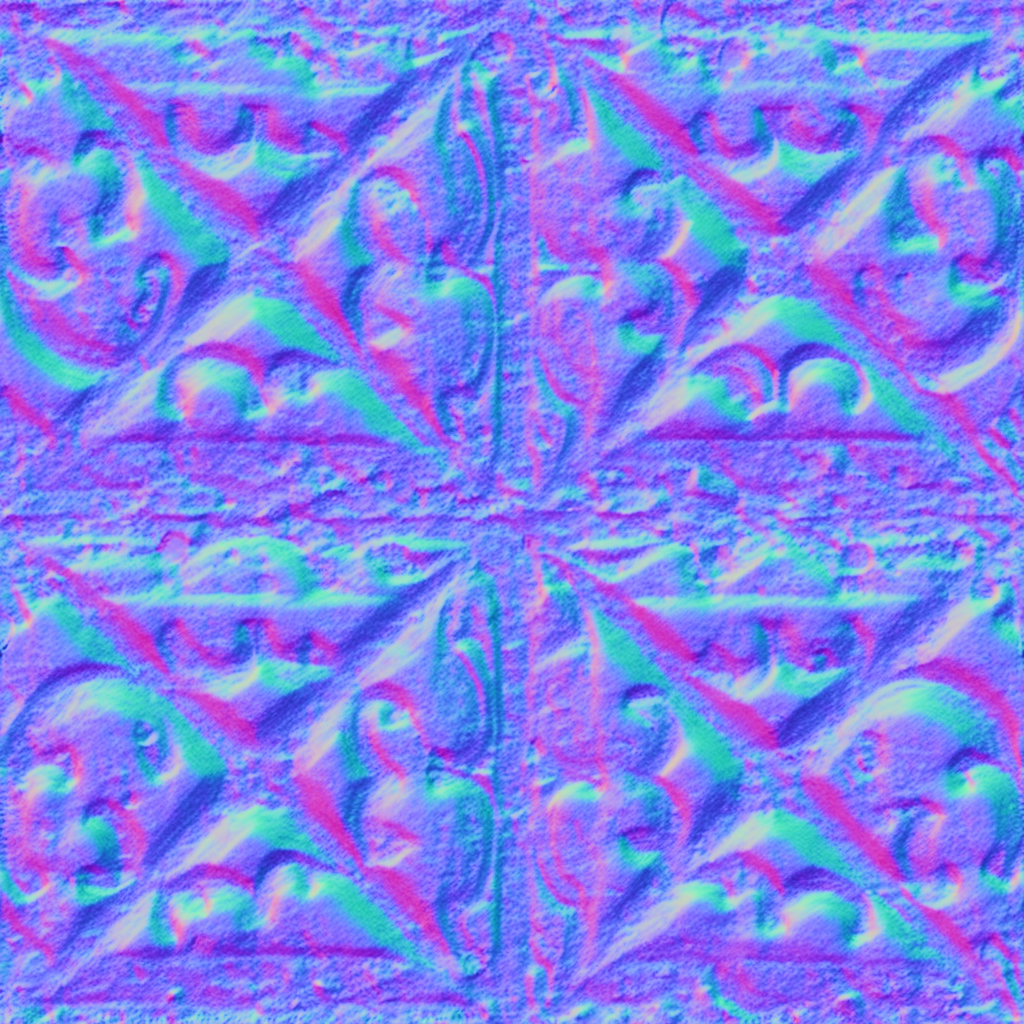

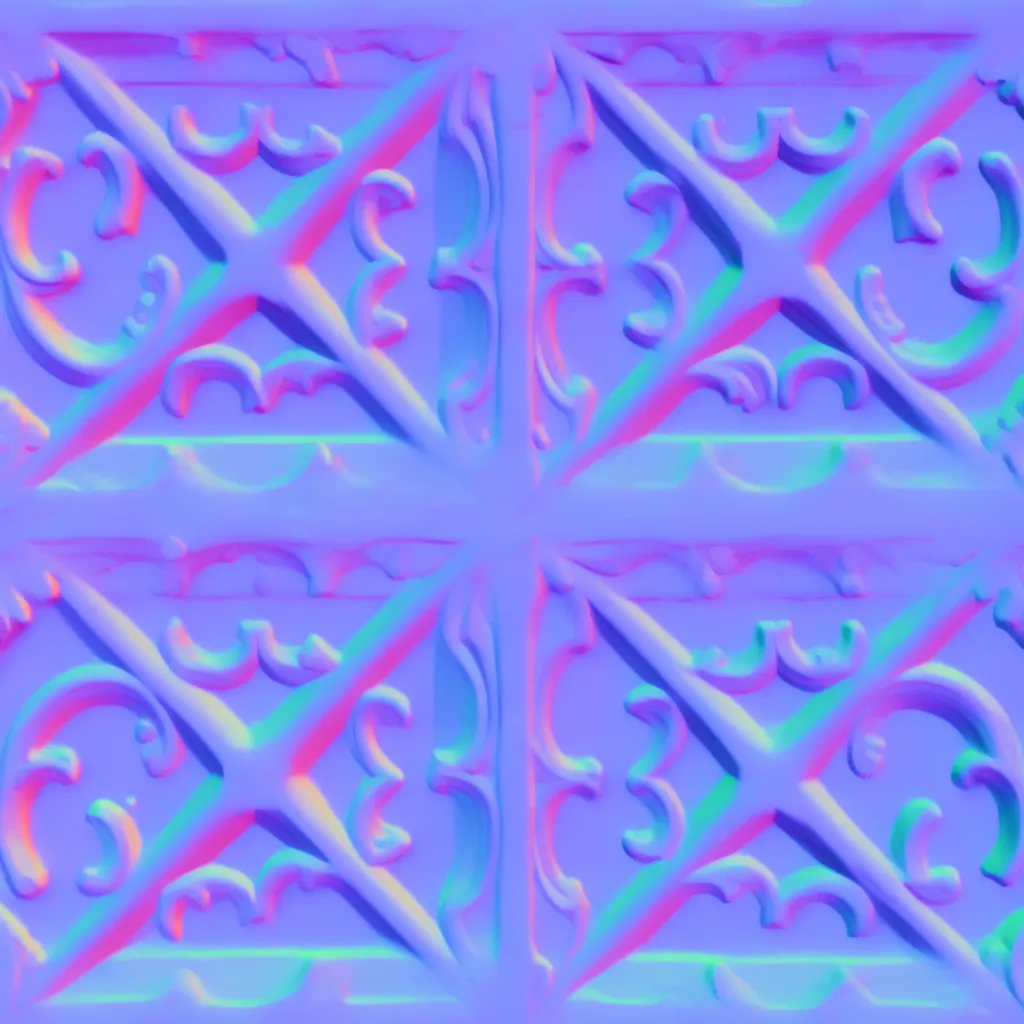

In order from top-left; render, CHORD, Marigold Normal, PATINA (Ours)

A renderer-made training set

PATINA’s dataset was built from CC0 material libraries, including AmbientCG, Poly Haven, and other public-domain sources. For each material, we collected the maps we cared about: basecolor/albedo, roughness, metalness, displacement, and normals.

Then, we rendered each material in dozens of different lighting scenarios using a custom Cook-Torrance BRDF renderer. That renderer implemented GGX/Trowbridge-Reitz microfacet distribution, a Schlick-GGX-style geometry term, Schlick Fresnel approximation, parallax occlusion mapping, and self-shadowing through height-field ray marching. Those renders became the “before” images, and the original PBR maps became the “after” targets for image-pair training. Importantly, this renderer would run in PyTorch, enabling massive dataset generation through GPU acceleration.

Using a nuanced renderer like this means a small material library can punch well above its weight. One material set can be rendered under many valid lighting conditions, so each asset becomes a generator for dozens of training pairs rather than just one.

A sequence of rendered frames using our BRDF renderer

How PATINA was trained

We trained each map modality individually rather than forcing everything into a single joint objective from day one. Training ran in three resolution stages — 512, 768, and 1024 — using a single pre-encoded text embedding per modality. In total, that worked out to roughly 1.5 million steps per modality, or about 7.5 million steps across all five maps.

Metalness was the odd one out. More than the other channels, it behaved like a segmentation problem before it behaved like a shading problem - so metalness required its own pre-training stage to first learn material segmentation before fine-grained prediction worked.

PATINA as a full material pipeline

To make the release useful outside of research demos, we also shipped specialized seamless versions of Z-Image Turbo Seamless Tiling and SeedVR2 Seamless Upscaling. These endpoints use wraparound fused tiled diffusion paths via MultiDiffusion, so generation, map detection and upscaling all preserve tile continuity instead of breaking it at the edges.

On top of those pieces sits the PATINA Material endpoint which uses seamless generation, seamless upscaling, and PATINA together to produce complete seamless tiling PBR materials up to 8K from text (with optional starting image,) starting at $0.08 for a full material set.

There is also the PATINA Material Extraction endpoint. This one goes a step further: give it an image and a simple label for the texture you want, and it identifies that texture, renders it flat, removes occluding elements, makes it seamlessly tileable, upscales it, and returns a full material set built from the same underlying PATINA pipeline.

What's next?

PATINA is a strong first step, but it also makes clear where the next gains are likely to come from.

The biggest one is data breadth. Our current training setup punches well above its weight because each material can be rendered under many different lighting conditions, but the underlying material library still skews toward dielectric surfaces. We are building a much larger material corpus to better represent a wider range of real-world materials, including more metallic, coated, translucent, and otherwise underrepresented classes. Better coverage should improve generalization and make the harder map modalities more reliable.

We are also working on a fine-tuned text-to-tiling-image model designed specifically for materials. Today, PATINA can be combined with seamless generation and upscaling endpoints to produce complete tiling material sets, but there is still a lot of room to make the very first image in that pipeline more natively material-aware. A dedicated text-to-tiling model should give us cleaner structure, better repeatability, and stronger material priors before map prediction even begins.

And finally, we are exploring additional map types beyond the current core set. Basecolor, roughness, metalness, displacement, and normals form a solid foundation, but they are not the whole story. We are interested in extending the system to support maps like luminance, opacity, and other specialized channels that can make materials more expressive and more useful in downstream rendering workflows.

References

Special Thanks

We want to give a special shoutout to Ubisoft La Forge. Their CHORD project — Chain of Rendering Decomposition for PBR Material Estimation from Generated Texture Images — directly inspired this work. CHORD makes a compelling case that material estimation becomes more tractable when you respect the structure of rendering itself, and that idea resonated deeply with how we approached PATINA.

Core rendering and material models. Cook & Torrance, A Reflectance Model for Computer Graphics (1982); Walter et al., Microfacet Models for Refraction through Rough Surfaces (2007); Schlick, An Inexpensive BRDF Model for Physically-based Rendering (1994); Tatarchuk, Practical Dynamic Parallax Occlusion Mapping (2005).

Backbones and geometry-related vision work. Esser et al., Scaling Rectified Flow Transformers for High-Resolution Image Synthesis (2024); Oquab et al., DINOv2: Learning Robust Visual Features without Supervision (2024); Ranftl et al., Vision Transformers for Dense Prediction (2021); Birkl et al., MiDaS v3.1: A model Zoo for Robust Monocular Relative Depth Estimation (2023); Ke et al., Repurposing Diffusion-Based Image Generators for Monocular Depth Estimation / Marigold (2024); Fu et al., GeoWizard: Unleashing the Diffusion Priors for 3D Geometry Estimation from a Single Image (2024); Bae & Davison, Rethinking Inductive Biases for Surface Normal Estimation / DSINE (2024).

Related material-estimation work. Boss & Lensch, Single Image BRDF Parameter Estimation with a Conditional Adversarial Network (2019); Vecchio et al., SurfaceNet: Adversarial SVBRDF Estimation from a Single Image (2021); Lopes et al., Material Palette: Extraction of Materials from a Single Image (2024); Ying et al., CHORD: Chain of Rendering Decomposition for PBR Material Estimation from Generated Texture Images (2025).

Seamless generation. Bar-Tal et al., MultiDiffusion: Fusing Diffusion Paths for Controlled Image Generation (2023).